Also, For All Mankind always finds a way to become even more joyfully unhinged every single season.

Welcome to my home on the web since 2002! The Timeline below contains microblogs, photos, check-ins, movies and TV I've watched, and more. Use the filters at the bottom to explore 16,132 posts over 25 years. On desktop? Try the Time Machine in the top-right corner to travel through history.

Only halfway through the first episode of For All Mankind season five, and I’m calling it — they’re going to cryogenically freeze Ed Baldwin.

After the OpenClaw Anthropipocalypse, I have been struggling to find a suitable alternative. Started with OpenAI Codex, and while it matches Opus 4.6’s 1M token context window, it is just not well suited for the use case of orchestration and friendly assistant. It has a tendency to hallucinate and its projected demeanor is… weird. It’s like concentrated Mark Zuckerberg from a personality perspective. Decently good at technical tasks, tho.

I am currently using z.ai with their “Coding” plan, and I’m impressed. GLM-5.1 is remarkably similar to Opus 4.6 in my experience thus far. The 200k token window is tiny, unfortunately, but with some creative use of subagents, it’s manageable. I’ve also kept Codex around for now, modifying my standard operating procedures to encourage the use of Codex subagents for grunt work that requires a large token count.

I’ve started blocking out an hour at the end of the day for prompting — capturing tasks and projects that get codified into Markdown files placed in an “ingest” directory. Then, I run a custom command that tells my agent to create a context fork for each file, autonomously executing each task in a sandbox. Then, I head home. If one of the forks encounters a blocker for any reason, it sends me a message on Signal which I can reply to or ignore.
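The ingest step described above can be sketched roughly as follows. This is a minimal illustration, not the actual command: `dispatch_ingest`, `run_task`, and `notify` are hypothetical names, with `run_task` standing in for whatever starts a sandboxed context fork and `notify` standing in for the Signal message sent on a blocker.

```python
from pathlib import Path
from typing import Callable


def dispatch_ingest(
    ingest_dir: Path,
    run_task: Callable[[str, str], str],
    notify: Callable[[str], None] = print,
) -> dict[str, str]:
    """Run one task per Markdown file and return {filename: outcome}.

    run_task(name, prompt) is a hypothetical hook for the agent fork;
    notify(message) is a hypothetical hook for the blocker message.
    """
    results: dict[str, str] = {}
    for task_file in sorted(ingest_dir.glob("*.md")):
        prompt = task_file.read_text(encoding="utf-8")
        try:
            results[task_file.name] = run_task(task_file.stem, prompt)
        except Exception as exc:
            # A blocked task should not stop the remaining tasks.
            results[task_file.name] = f"blocked: {exc}"
            notify(f"{task_file.name} is blocked: {exc}")
    return results
```

Each file is handled independently, so one blocked task only produces a notification and the rest of the queue keeps going.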

Back in LA after a lovely Easter week in Palm Desert. Happy to be home, but I’ll miss the pool and the stars! I did receive some fun packages while I was away, including an XTEINK X3 to go with my X4.

I’m so happy Daredevil is back. The O.G. Netflix series was great thanks to lovable characters, a great villain, and John Wick-level fight scenes.

Born Again got off to a rocky start in S1, with multiple rewrites, pivots, and chaos that were evident on the screen, but at least it was on screen.

S2 of Born Again has recaptured the magic, with a budget clearly being put to work with well-choreographed fight scenes in epic (expensive) settings. We also finally get some bonus heroes that participate.

Fun ride so far!

Black Widow’s brother Brown Daddy Long Legs #shitsuperheroes

Captain Poland. #shitsuperheroes

(Poland is pretty cool, tho)

Private America #shitsuperheroes

Batmiddleagedman. #shitsuperheroes

The Mostly Invisible Man. #shitsuperheroes

The BC-250 PC is in its new home in Palm Desert. My son and I have already had a lot of fun with it, and we've kicked off a Baldur's Gate campaign from scratch now that he's a D&D expert. Fun!

No idea how Mistborn can be adapted into one movie. That’s a lot to fit into a couple of hours, even if it just depicts the first book. I expected a TV series with 8-10 episodes per book! Maybe two movies per book? Eventually you’re going to run into The Hobbit problems.

Hosting a dinner tomorrow. Just put an 8-pound bone-in pork shoulder on the Big Green Egg with my trusty (old) Stoker BBQ controller. I shot the URL to the monitoring page over to my OpenClaw agent and they’re gonna keep an eye on it while I sleep. My first AI-monitored BBQ!

That was such a fun race! So happy for Kimi, and my boy Charles had the most impressive drive. George has an objectively faster car, and still got his ass beat for 20 laps. #F1

This is one of the better examples of how much luck influences an #F1 race. And it’s not over yet. I have a feeling there may be another safety car…

Ollie! No!! 💔 #F1

Okay, I’m no longer appending permalinks to my syndicated microblog posts. Been meaning to get around to it, and today is the day thanks to OpenClaw 🦞🤖

Finally home after a long week of travel. Buffalo NY, Detroit, Washington DC… I’m exhausted.

Anthropic’s expansion of token limits, scaling from 200k tokens to 1M tokens, is huge. It’s enabling me to engage at a deeper level during brainstorms, and allows the model to fit more code into the context window.

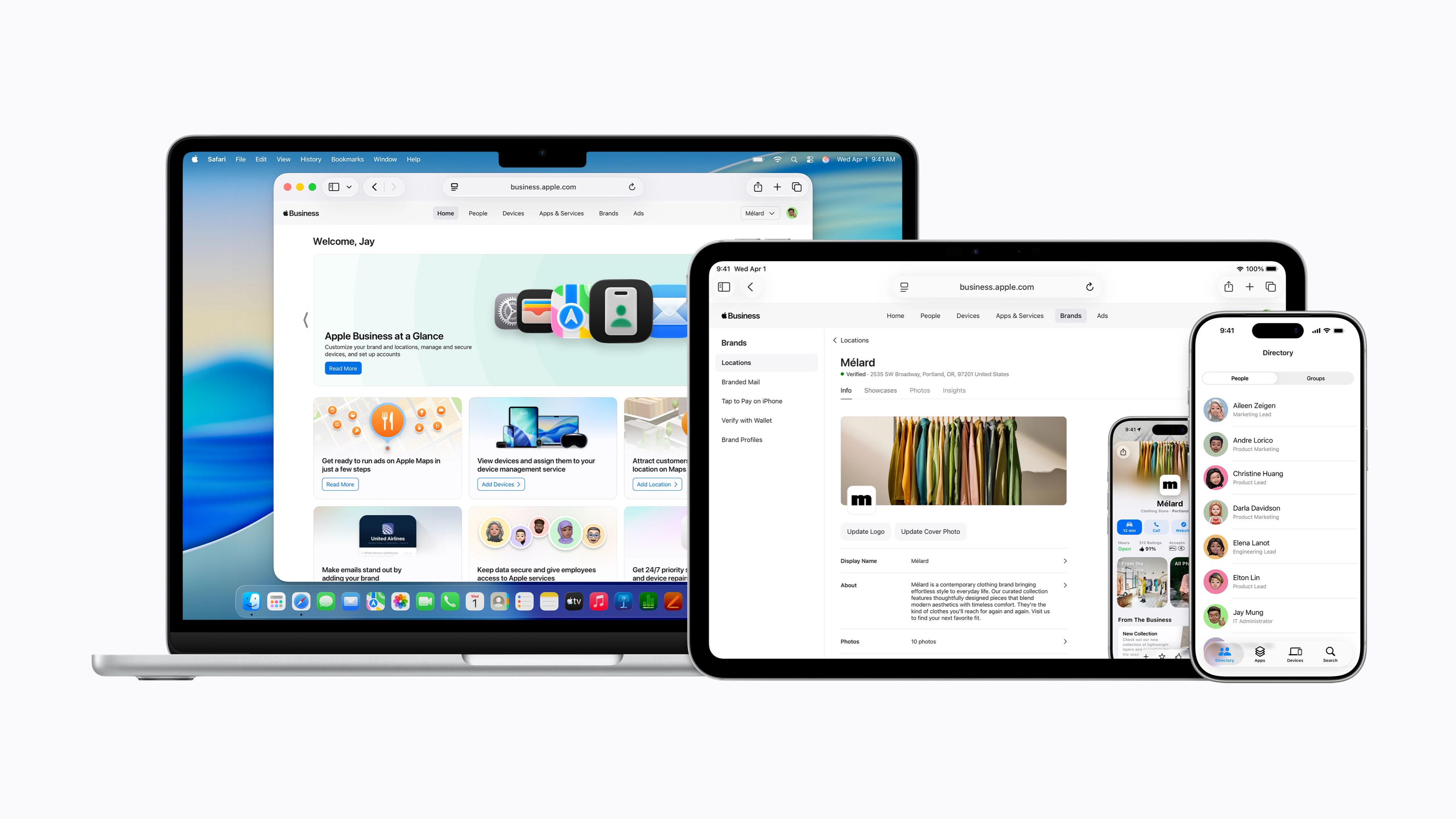

MacRumors’ coverage of Apple’s new business program includes this gem:

The platform introduces Managed Apple Accounts with what Apple describes as "cryptographic separation" between personal and work data, allowing employees to use the same device for both purposes without commingling information. Account provisioning can be automated through integrations with identity providers such as Google Workspace and Microsoft Entra ID.

For those of us who work for large enterprises, this would be a godsend, making it much easier to comply with IT restrictions and access personal Apple services at the same time. Cool!

I’m a SoCal resident in Buffalo, NY in March and I’m regretting my life choices. Holy crap it’s cold!

Watched "Marty Supreme" earlier this week. It was definitely a fun watch, but you really have to be willing to accept Marty as an anti-hero, otherwise you'll just be pissed off the entire movie.

A collection of moments captured over the years.

I'm a techie, tinkerer, and father living in beautiful Southern California. My day job is being a smartypants technologist at a tech company, where I get to play with cool stuff. This is my personal website, which has been producing hot, fresh content off and on since 2002. Let's talk about that a bit, because my site is a bit of a unicorn thanks to its longevity.

Back in the day, it was cool to have a blog. GeoCities, Tumblr, Drupal, and other platforms were all the rage. Back in 2002, I was finishing up my time at Georgia Tech and decided to study abroad for my last semester. I created the site to chronicle my journey with the legendary Movable Type. I would write on my prized Titanium PowerBook using a local install of Movable Type, generate the static files, and use a custom Python script to upload new content whenever I could find an Internet Cafe (yes... that was a thing). It was joyous! Blogrolls, web rings, RSS feeds... the web was so much fun.

Social media has been both a blessing and a curse. Over time, that line has shifted sharply into cursed territory. At first, social media platforms enabled people to have a presence on the web with very little effort, but also very little customization and control. The thing that really kicked the social media silos into high gear was the social part of social media – the ability to interact with friends and family by sharing photos, replying, and "reposting" and "liking" interesting content.

But, social media has been a bad thing for the social web. These silos come and go, taking people's history with them. They're also monetized through advertising and the sale of personal information for targeting. Their algorithms are tailored to keep users engaged so that their eyeballs see as many ads as possible. As a result, social media silos are often hotbeds of hate, misinformation, and divisiveness. Outrage is a highly motivating way to keep people engaged, and the world is worse off for it.

It's been a long time since the personal social web has been a thing. Silos have effectively killed blogging, which is a shame, because personal websites aren't about "engagement" or making money by selling people's information. They're about being yourself, holding onto memories, and connecting with others.

Back in 2013, I joined DreamHost to lead Software Development. DreamHost is a special place, with lovely people, a great culture, and a mission to help people own their digital presence. DreamHost hadn't given up on the social web, but they also hadn't figured out how to re-ignite it. I spent a lot of time searching for pockets of activity on the social web, and attempting to coax those embers into bonfires. That work took me into the WordPress community, where DreamHost has an incredible WordPress user base. But, then I truly found my community – the IndieWeb movement.

The IndieWeb is not just a community, it's a movement. It describes itself as "a people-focused alternative to the 'corporate web,'" emphasizing that "your content is yours," "you are better connected," and "you are in control." These were my people. These are my people.

With an intersection between my work and the IndieWeb community, I kicked off a project to repatriate my data from Instagram, Facebook, and Twitter, downloading all of my information, closing my accounts, and re-hosting it all on a new website. I also grabbed all of my content from my legacy websites dating back to 2002, centralizing it all into a site that was truly mine.

At first, I used an open source IndieWeb CMS called Known. Known served as a wonderful introduction to IndieWeb standards and principles like POSSE, IndieAuth, Micropub, and microformats2.

My site fully reconnected me to the open social web, free from the encumbrances of surveillance capitalism and bad actors like Meta and X, both of which are owned by terrible people with no moral or ethical compass. I was posting regularly, with photos, short-form microblog posts, articles, and even reviews, bookmarks, and likes. I started tracking my location 24/7 using my phone, saving it all to a private repository. I syndicated my content out to Twitter for a long time until I finally gave up on it, and now I syndicate to new, more open platforms like Mastodon and Bluesky.

After a really promising start, Known ultimately stagnated. There is still a small community maintaining it, creating plugins and keeping it up to date with evolving standards, but it's not really a platform for the future anymore. Plus, it uses technologies that are not really in my wheelhouse, like PHP, and it requires big beefy relational databases to store its content.

In 2023-2024, I started thinking about what was next for this site. I had a desire to catch up to modern web standards, adopt my favorite programming language, Python, and make hosting cheap, simple, and easy. What you see today is the result of a few years of off-and-on work on my new CMS, Dwell.

Dwell is a powerful, but simple CMS built in Python. It stores all of its content in JSON files on disk, and uses the excellent, tiny, and powerful DuckDB database engine to enable lightning fast queries. Dwell is currently not open source. It was briefly, but I was changing things so quickly that I didn't really want to attract a community that I would need to manage. That may change in the future, but for now, I am pretty satisfied with the result.
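To illustrate the general pattern of JSON files on disk as the source of truth with a query engine layered on top, here is a minimal sketch. It is not Dwell's code: the `published` and `body` fields and both function names are assumptions made for the example, and the standard library's `sqlite3` stands in for DuckDB so the sketch runs without extra packages.

```python
import json
import sqlite3
from pathlib import Path


def build_index(content_dir: Path) -> sqlite3.Connection:
    """Load every JSON post under content_dir into an in-memory table.

    The JSON files stay authoritative; the table is disposable and can
    be rebuilt from disk at any time.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE posts (path TEXT, published TEXT, body TEXT)")
    for path in sorted(content_dir.rglob("*.json")):
        post = json.loads(path.read_text(encoding="utf-8"))
        # 'published' and 'body' are hypothetical field names.
        conn.execute(
            "INSERT INTO posts VALUES (?, ?, ?)",
            (str(path), post.get("published"), post.get("body")),
        )
    conn.commit()
    return conn


def posts_for_year(conn: sqlite3.Connection, year: int) -> list[tuple[str, str]]:
    """Return (published, body) pairs for one year, oldest first."""
    rows = conn.execute(
        "SELECT published, body FROM posts "
        "WHERE substr(published, 1, 4) = ? ORDER BY published",
        (str(year),),
    )
    return rows.fetchall()
```

Because the index is rebuilt from the files, backing up or moving the site only involves copying a directory of JSON.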

Dwell is ultimately a repository for memories. The breadth and depth of the content is special, and I wanted to be able to savor the special moments in my life; to keep myself grounded in what matters most – family and friends and our shared memories. When I was searching for inspiration for the design and architecture of Dwell, I found it in a surprising place.

There is a now legendary episode of the hit AMC show Mad Men entitled The Wheel. The episode focuses on an advertising campaign pitch to Kodak, who was preparing to release a new slide projector. The main character, Don Draper, gives a stunning pitch that is worth watching. In it, Draper leans into the power of nostalgia:

Technology is a glittering lure. But there is the rare occasion when the public can be engaged on a level beyond flash, if they have a sentimental bond with the product.

My first job, I was in house at a fur company with this old pro copywriter, Greek, named Teddy. And Teddy told me the most important idea in advertising was ‘new.’ Creates an itch. You simply put your product in there as a kind of calamine lotion.

But he also talked about a deeper bond with the product: nostalgia. It’s delicate, but potent. Teddy told me that in Greek nostalgia literally means ‘the pain from an old wound.’ It’s a twinge in your heart, far more powerful than memory alone.

This device isn’t a space ship. It’s a time machine. It goes backwards, forwards. Takes us to a place where we ache to go again. It’s not called ‘The Wheel.’ It’s called ‘The Carousel.’ It lets us travel the way a child travels. Around and around and back home again to a place where we know we are loved.

Don's monologue is easily the best articulation of the power of nostalgia and memories. Living life to the fullest means treasuring every moment. This website is not a wheel, it's a time machine. The main part of this site is the timeline, which contains nearly every piece of content I've ever created on the internet. At the top right of the timeline, you'll find a circular widget. As you scroll forward and backward, you'll see the indicators in the three rings move along with you, as the rings represent years, months, and days. If you click the widget, you'll enter a time machine that allows you to jump to any position in the timeline, discovering my life experiences, travels, and more.
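One way the three ring indicators could be derived from a timeline date is sketched below. This is an assumption about how such a widget might work, not the site's implementation: `ring_positions` is a hypothetical helper, and each position is expressed as a fraction of a full turn between 0 and 1.

```python
import calendar
from datetime import date


def ring_positions(day: date, first_year: int, last_year: int) -> tuple[float, float, float]:
    """Return (year, month, day) indicator positions as fractions of a turn.

    first_year and last_year bound the timeline and are assumed inputs.
    """
    if not first_year <= day.year <= last_year:
        raise ValueError("date is outside the timeline")
    year_span = last_year - first_year + 1
    days_in_month = calendar.monthrange(day.year, day.month)[1]
    return (
        (day.year - first_year) / year_span,  # outer ring: years
        (day.month - 1) / 12,                 # middle ring: months
        (day.day - 1) / days_in_month,        # inner ring: days
    )
```

For a timeline running from 2002 through 2025, `ring_positions(date(2014, 7, 16), 2002, 2025)` puts the year and month indicators at the halfway point and the day indicator just under halfway around.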

On the bottom right of the screen, you'll sometimes see a "mini map." As you scroll, the map will update, following my location at each position in the timeline. You'll find monthly and yearly summaries, and a special filter in the navigation bar that allows you to decide what types of content you'll see. If you're a person who still uses a feed reader, check out my feed generator, which allows you to subscribe to your own customized feed.

After several years of development, I am really happy to have a new home. I hope you enjoy it here.

It’s a sad day for those of us leveraging our Claude fixed-price plans with OpenClaw. I don’t blame Anthropic one bit. It wasn’t a sustainable situation, but it sure was fun while it lasted.

One thing that is not clear to me — at my current level of usage, what would my bill look like for a month?

Time to immediately consider buying another M4 Mac Mini, or a Mac Studio that can replace my current primary Mac Mini.